In the high-stakes, hyper-kinetic arena of digital performance marketing, budget is the lifeblood, and efficiency is the pulse. Yet, for many enterprise-level advertisers, that pulse is often erratic, suffering from silent arrhythmias that bleed thousands of dollars before a human analyst even finishes their first espresso. We are living in an era where “setting and forgetting” a Google Ads or Meta campaign is less of a strategy and more of a financial suicide pact. Enter the silent sentinel: real-time anomaly detection.

The premise is deceptively simple: identifying patterns in data that do not conform to expected behavior. However, the execution is a masterclass in statistical sophistication. In the context of paid media, anomaly detection isn’t just about spotting a sudden spike in spend; it’s about the surgical identification of bot-driven click surges, broken conversion tracking, seasonal deviations, and the dreaded “fat-finger” manual entry error. This guide explores the analytical frameworks and technical architectures required to transmute raw ad data into a fortress of fiscal responsibility.

The Anatomy of an Ad-Tech Catastrophe

To understand the “why” of anomaly detection, one must first confront the “how” of budget waste. Digital advertising is a fragmented ecosystem, prone to entropy. Waste typically manifests in three distinct flavors, each more insidious than the last.

1. Sophisticated Invalid Traffic (SIVT)

Unlike General Invalid Traffic (GIVT), which covers easily filtered sources such as known search engine crawlers and data-center traffic, SIVT is designed to mimic human behavior. We are talking about sophisticated botnets that move cursors, pause to “read” content, and even complete lead forms with stolen PII (Personally Identifiable Information). Without real-time monitoring, your Cost Per Acquisition (CPA) might look phenomenal while your actual sales pipeline remains a barren wasteland.

2. The “Broken Pipe” Syndrome

Tracking pixels are fragile. A minor change to a website’s tag manager container (such as Google Tag Manager) or a shift in cookie consent strings can instantly sever the link between an ad click and a conversion. When the bidding algorithm loses its feedback loop, it begins to “hallucinate,” often over-bidding on low-quality traffic because it can no longer distinguish between a bounce and a buy. Real-time detection flags the divergence between click volume and conversion signals within minutes, not days.
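
One way to make that divergence concrete is to ask how surprising the latest conversion count is, given the clicks that produced it. The sketch below assumes hourly conversions roughly follow a Poisson distribution whose mean is clicks × baseline conversion rate; the function names, the baseline rate, and the 0.001 cutoff are illustrative assumptions, not a platform feature.

```python
import math

def poisson_cdf(k: int, lam: float) -> float:
    """P(X <= k) for X ~ Poisson(lam), summed in log space to avoid underflow."""
    if lam <= 0:
        return 1.0
    log_terms = [-lam + i * math.log(lam) - math.lgamma(i + 1) for i in range(k + 1)]
    peak = max(log_terms)
    return min(1.0, math.exp(peak) * sum(math.exp(t - peak) for t in log_terms))

def tracking_break_suspected(clicks: int, conversions: int,
                             baseline_cvr: float, alpha: float = 0.001) -> bool:
    """True when this few conversions is implausible for this many clicks.

    With working tracking we expect about clicks * baseline_cvr conversions;
    if observing `conversions` or fewer has probability below alpha, a broken
    click-to-conversion link is a likely explanation worth investigating.
    """
    expected = clicks * baseline_cvr
    return poisson_cdf(conversions, expected) < alpha
```

For example, 2,000 clicks at a 3% baseline should yield about 60 conversions, so seeing only 5 trips the check while 55 does not. Because conversions often arrive hours after the click, the most recent hours need a lag allowance before this test is fair.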

3. Algorithmic Runaway

Modern bidding strategies like Target ROAS (Return on Ad Spend) or Maximize Conversions are black boxes. Occasionally, a statistical outlier—perhaps a single high-value purchase from an unrepresentative user—can skew the algorithm’s perception of reality. It may then aggressively pursue similar (yet ultimately non-converting) profiles, burning through the monthly budget in a frantic, misguided quest for more outliers.

The Mathematical Framework: Moving Beyond Simple Thresholds

If you are still relying on static alerts—such as “Notify me if spend increases by 20%”—you are bringing a knife to a quantum physics fight. Static thresholds are the enemies of nuance. They ignore seasonality, day-parting trends, and the inherent volatility of the auction environment. True anomaly detection leverages advanced statistical modeling to create a dynamic “envelope” of expected behavior.

“The difference between a trend and an anomaly is often found in the residuals of a time-series decomposition. If the noise starts singing a melody, you’ve got a problem.”

Bayesian Structural Time Series (BSTS)

BSTS models are particularly adept at handling the “marketing mix” problem. By decomposing a time series into trend, seasonality, and regression components, these models can predict what a metric *should* look like in the absence of an intervention. When the actual data deviates significantly from this counterfactual prediction, an anomaly is triggered. This is particularly useful for distinguishing between a legitimate holiday surge and a bot-induced spike.
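
Fitting a true BSTS model calls for a dedicated library (R’s `bsts` and `CausalImpact` packages are the usual reference points). As a much simpler stand-in that shows only the counterfactual idea, the sketch below predicts the next value from the same position in previous seasonal cycles and flags actuals outside a ±k·σ band. It has no trend or regression component and is not BSTS; the function names and the k = 3 default are assumptions.

```python
import statistics

def seasonal_envelope(history, period, k=3.0):
    """Expected value and (lower, upper) band for the point right after history.

    history: past observations, oldest first, covering at least two full cycles.
    period: season length, e.g. 24 for hour-of-day patterns in hourly data.
    """
    cycles = len(history) // period
    if cycles < 2:
        raise ValueError("need at least two full seasonal cycles of history")
    # Keep whole cycles ending at the latest point, so the next point is phase 0.
    data = history[-cycles * period:]
    phase_means = [statistics.fmean(data[p::period]) for p in range(period)]
    # Spread of what the seasonal pattern alone fails to explain.
    residuals = [x - phase_means[i % period] for i, x in enumerate(data)]
    spread = statistics.stdev(residuals)
    expected = phase_means[0]
    return expected, expected - k * spread, expected + k * spread

def is_anomalous(actual, history, period, k=3.0):
    """True when the actual value falls outside the counterfactual band."""
    _, lower, upper = seasonal_envelope(history, period, k)
    return not (lower <= actual <= upper)
```

With hourly data, `period=24` captures day-parting and `period=168` captures hour-of-week effects, provided the history spans enough cycles.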

The Isolation Forest Algorithm

Widely used in fraud and intrusion detection, the Isolation Forest is an unsupervised learning algorithm that builds many random trees, each splitting the data on a randomly chosen feature at a random value. Because anomalies are “few and different,” they are isolated in far fewer splits than normal points, so a short average path length marks an observation as anomalous. In a high-dimensional space where you are tracking conversion rate (CVR), click-through rate (CTR), cost per click (CPC), and Impression Share simultaneously, the Isolation Forest can detect multidimensional shifts that would be invisible to a human looking at a flat spreadsheet.
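
A minimal sketch with scikit-learn’s `IsolationForest`, assuming numpy and scikit-learn are installed. The training rows are synthetic stand-ins for hourly [CVR, CTR, CPC, impression share] values; in practice you would train on a window of recent hours that analysts consider normal.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(seed=0)

# Synthetic "normal" hours: columns are CVR, CTR, CPC, impression share.
normal_hours = np.column_stack([
    rng.normal(0.03, 0.003, 500),   # conversion rate
    rng.normal(0.05, 0.005, 500),   # click-through rate
    rng.normal(1.20, 0.10, 500),    # cost per click
    rng.normal(0.60, 0.03, 500),    # impression share
])

model = IsolationForest(n_estimators=200, contamination="auto", random_state=0)
model.fit(normal_hours)

new_hours = np.array([
    [0.031, 0.052, 1.18, 0.61],  # close to the centre of normal behaviour
    [0.002, 0.150, 1.20, 0.60],  # CTR triples while CVR collapses: bot-like
])
labels = model.predict(new_hours)            # 1 = inlier, -1 = anomaly
scores = model.decision_function(new_hours)  # lower = more anomalous
```

Note that no single column of the second row is extreme in CPC or impression share; it is the combination of surging clicks and vanishing conversions that gets it isolated quickly.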

Z-Score and Standard Deviation

For those just beginning their journey, the Z-score remains a foundational tool. By calculating how many standard deviations a data point is from the mean, you can quantify “weirdness.” However, in paid ads the mean and standard deviation should be computed over a trailing window (a “Rolling Z-Score”), because your “normal” is constantly evolving as the market fluctuates.
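
A rolling z-score is z = (x − μ) / σ, with μ and σ computed over a trailing window instead of all history. The sketch below assumes evenly spaced observations (for example, hourly spend); the window length, the `min_periods` guard (which must be at least 2), and the function name are illustrative choices.

```python
import statistics
from collections import deque

def rolling_z_scores(values, window=24, min_periods=12):
    """Z-score of each point against the trailing window that precedes it.

    The current point is excluded from its own baseline, so a spike cannot
    inflate the mean and standard deviation it is judged against.
    Returns None where history is too short or the window has zero spread.
    """
    trailing = deque(maxlen=window)  # drops the oldest value automatically
    scores = []
    for x in values:
        if len(trailing) >= min_periods:
            mu = statistics.fmean(trailing)
            sigma = statistics.stdev(trailing)
            scores.append((x - mu) / sigma if sigma > 0 else None)
        else:
            scores.append(None)
        trailing.append(x)
    return scores
```

A common starting rule is to alert when |z| > 3. A 24-hour window still blends day-parting patterns together, which is one reason seasonal models such as BSTS exist.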

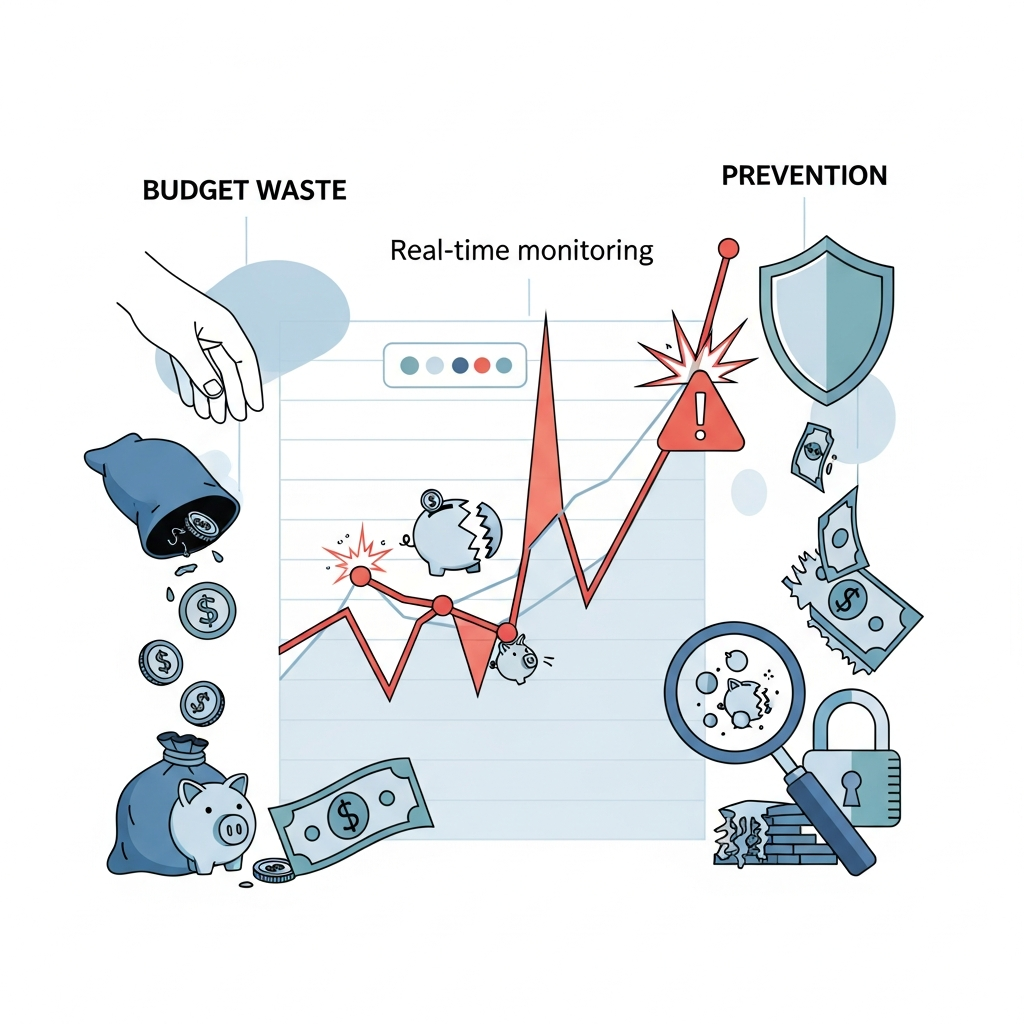

Real-Time vs. Reactive: The Cost of Latency

In the world of high-frequency trading, milliseconds equal millions. Paid advertising is increasingly mirroring this reality. Most native platforms provide data with a 3-hour to 24-hour latency. Relying solely on the Google Ads UI to catch a budget bleed is like trying to put out a house fire with a report that arrived in the mail the next day.

Real-time monitoring requires an independent data pipeline. By hooking into APIs (Application Programming Interfaces) and streaming data into a centralized warehouse (like BigQuery or Snowflake), advertisers can run custom scripts every 15 minutes. The APIs carry the platforms’ own reporting delay, so this is not truly instantaneous; what it removes is the human bottleneck, cutting the “Mean Time to Detection” (MTTD) from half a day to roughly one polling interval on top of whatever lag the platform imposes. The ROI here isn’t just the wasted spend you avoid; it also includes the opportunity value of redeploying the reclaimed budget.

- Immediate Automated Pausing: If spend velocity exceeds 500% of the hourly norm, scripts can automatically pause the campaign, pending human review.

- Negative Keyword Injection: Real-time detection can identify a surge in irrelevant search queries and add them as negatives before the next 10,000 impressions are served.

- Bid Capping: During periods of unexplained CPC inflation, an automated system can enforce a temporary bid cap to protect the margin.
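
The three rules above can be sketched as a planner that decides what should happen and leaves execution to a separate API client. Only the 500% pause threshold comes from the text; the CPC trigger, bid-cap factor, minimum click count, word-overlap relevance check, and action names are hypothetical, and nothing here calls the Google Ads or Meta APIs.

```python
from dataclasses import dataclass

@dataclass
class HourlySnapshot:
    campaign_id: str
    spend: float         # spend in the latest hour
    hourly_norm: float   # expected spend for this hour (e.g. from a baseline model)
    avg_cpc: float       # average cost per click in the latest hour
    baseline_cpc: float  # typical CPC for this hour

def plan_actions(snap: HourlySnapshot, pause_multiple: float = 5.0,
                 cpc_multiple: float = 1.5, cap_multiple: float = 1.2) -> list:
    """Return (action, detail) tuples; a separate executor would call real APIs."""
    actions = []
    if snap.hourly_norm > 0 and snap.spend > pause_multiple * snap.hourly_norm:
        # Spend velocity above 500% of the hourly norm: pause pending human review.
        actions.append(("pause_campaign", snap.campaign_id))
    elif snap.baseline_cpc > 0 and snap.avg_cpc > cpc_multiple * snap.baseline_cpc:
        # Unexplained CPC inflation (only checked if not pausing, since a paused
        # campaign needs no cap): propose a temporary cap near the baseline.
        actions.append(("set_temporary_bid_cap", round(cap_multiple * snap.baseline_cpc, 2)))
    return actions

def negative_keyword_candidates(search_terms, relevant_words, min_clicks=20):
    """Terms with enough clicks, zero conversions, and no word from relevant_words.

    search_terms: (term, clicks, conversions) tuples; relevant_words: lowercase set.
    """
    candidates = []
    for term, clicks, conversions in search_terms:
        words = set(term.lower().split())
        if clicks >= min_clicks and conversions == 0 and not (words & relevant_words):
            candidates.append(term)
    return candidates
```

Keeping the decision separate from the API call makes it easy to log every proposed action, require approval for some of them, and replay past data to see what the rules would have done.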

The Human Element: Dealing with “False Positives”

One cannot discuss anomaly detection without addressing the boy who cried wolf. An overly sensitive system will bombard your Slack channels with alerts every time a celebrity mentions a related keyword on Twitter or a competitor goes dark. This leads to “alert fatigue,” where practitioners start ignoring the very system designed to protect them.

To mitigate this, sophisticated systems employ a Human-in-the-Loop (HITL) feedback mechanism. When an anomaly is flagged, the analyst shouldn’t just “resolve” it; they should categorize it. Was it a “True Positive” (actual fraud/error) or a “False Positive” (explainable market shift)? These labels are then fed into the machine learning model to refine the “expected behavior” envelope, making the system more resilient and intelligent over time.
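
The simplest version of this feedback loop re-tunes the alert threshold rather than retraining the model. The sketch assumes each reviewed alert is stored with the anomaly score it fired at (for example, |z|) and the analyst’s True/False Positive label; the function name and the precision target are hypothetical policy choices.

```python
def tune_threshold(labeled_alerts, target_precision=0.8):
    """Lowest alert threshold whose past alerts would have met the precision target.

    labeled_alerts: (score, is_true_positive) pairs from analyst reviews.
    Returns None if no candidate threshold reaches the target.
    """
    for threshold in sorted({score for score, _ in labeled_alerts}):
        kept = [is_tp for score, is_tp in labeled_alerts if score >= threshold]
        precision = sum(kept) / len(kept)  # share of kept alerts that were real
        if precision >= target_precision:
            return threshold
    return None
```

With the history (3.1, FP), (3.4, FP), (3.9, TP), (4.2, FP), (5.0, TP), (6.3, TP), (8.0, TP) and a 0.8 target, the threshold rises to 3.9, silencing the two lowest-scoring false alarms. Raising the threshold also suppresses any real incidents below it, so recall deserves a look before the change ships.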

Implementing Anomaly Detection: A Step-by-Step Blueprint

For the elite marketer, implementation is a three-phase process. It requires a synergy of engineering prowess, statistical rigor, and domain expertise.

Phase 1: Data Aggregation and Normalization

The first hurdle is the “silo” problem. Your Google Ads data doesn’t talk to your Shopify backend, and your Meta Ads data is off in its own walled garden. You must centralize this data. Use an ETL (Extract, Transform, Load) tool to pull raw, hourly data into a cloud environment. Crucially, normalize the data (aligning every platform to a common time zone and converting spend into a single reporting currency) so that you are comparing apples to apples.
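
A minimal normalization step, assuming each platform row arrives with a local-hour timestamp, an IANA time zone name for the ad account, and a spend amount in the account currency. The exchange rates below are placeholders, not real quotes; a production pipeline would join dated rates from a finance or FX source.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

# Placeholder rates into the reporting currency (USD); NOT real quotes.
USD_PER_UNIT = {"USD": 1.0, "EUR": 1.08, "GBP": 1.27}

def normalize_row(local_hour: str, tz_name: str, spend: float, currency: str) -> dict:
    """Convert one platform row to a UTC hour and USD spend.

    local_hour: naive ISO timestamp in the ad account's time zone.
    tz_name: IANA zone name, e.g. "Europe/Berlin".
    """
    # Attaching the zone with replace() treats an ambiguous fall-back hour
    # as its first occurrence (fold=0).
    local = datetime.fromisoformat(local_hour).replace(tzinfo=ZoneInfo(tz_name))
    return {
        "hour_utc": local.astimezone(timezone.utc).isoformat(),
        "spend_usd": round(spend * USD_PER_UNIT[currency], 2),
    }
```

A 14:00 row from a Berlin account on 15 March 2024 (CET, UTC+1) lands in the 13:00 UTC bucket, alongside rows from accounts in other zones.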

Phase 2: Defining the “Baseline of Sanity”

Don’t try to track everything at once. Start with the “Golden Metrics”:

- Spend Velocity: The rate of budget consumption per hour.

- Conversion Lag: The delta between a click and a recorded conversion.

- CPC Volatility: Unexplained jumps in cost per click.

- CTR Anomalies: Unexpectedly high click-through rates that suggest bot manipulation.
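
One possible way to compute the Golden Metrics from hourly rows, assuming each row carries spend, clicks, and impressions, and that click and conversion timestamps can be paired. These operationalizations (mean hourly spend, median lag, coefficient of variation for CPC, latest CTR relative to the preceding hours) are illustrative choices rather than industry-standard definitions.

```python
import statistics
from datetime import datetime

def golden_metrics(hour_rows: list[dict],
                   conversion_pairs: list[tuple[datetime, datetime]]) -> dict:
    """Summarize hourly rows (oldest first) into the four Golden Metrics.

    hour_rows: dicts with "spend", "clicks", and "impressions" keys.
    conversion_pairs: (click_time, conversion_time) for attributed conversions.
    """
    spend = [row["spend"] for row in hour_rows]
    cpcs = [row["spend"] / row["clicks"] for row in hour_rows if row["clicks"]]
    ctrs = [row["clicks"] / row["impressions"] for row in hour_rows if row["impressions"]]
    lags = [(conv - click).total_seconds() / 3600 for click, conv in conversion_pairs]
    prior_ctr = statistics.fmean(ctrs[:-1]) if len(ctrs) > 1 else None
    return {
        # Spend velocity: average spend per hour over the window.
        "spend_velocity_per_hour": statistics.fmean(spend) if spend else None,
        # Conversion lag: median hours from click to recorded conversion.
        "median_conversion_lag_hours": statistics.median(lags) if lags else None,
        # CPC volatility: coefficient of variation (std dev / mean) of hourly CPC.
        "cpc_volatility": (statistics.pstdev(cpcs) / statistics.fmean(cpcs)
                           if len(cpcs) > 1 else None),
        # CTR anomaly input: latest hour's CTR relative to the preceding hours.
        "ctr_vs_prior_mean": ctrs[-1] / prior_ctr if prior_ctr else None,
    }
```

Each output can then be fed to the rolling z-score or seasonal checks, so that, for instance, a `ctr_vs_prior_mean` far above 1 with flat conversions becomes a bot-traffic alert.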

Phase 3: Automation and Remediation

An alert is only as good as the action it triggers. Use tools like Python (with libraries such as pandas and Prophet) to run your detection scripts. Connect these scripts to your communication stack (Slack, PagerDuty, or email) and, ideally, back into the Ad Platform APIs to execute changes automatically.
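
A standard-library sketch of the Slack leg, assuming a Slack incoming webhook, which accepts an HTTP POST with a JSON body containing a `text` field. The webhook URL is yours to supply; the function names and message format are hypothetical, and PagerDuty or email would need their own senders.

```python
import json
import urllib.request

def format_alert(metric: str, actual: float, expected: float) -> str:
    """Human-readable anomaly message with the percentage deviation."""
    pct = (actual - expected) / expected * 100 if expected else float("inf")
    return (f":rotating_light: {metric} is {actual:,.2f} vs expected "
            f"{expected:,.2f} ({pct:+.0f}%)")

def build_slack_request(webhook_url: str, text: str) -> urllib.request.Request:
    """POST request for a Slack incoming webhook (JSON body with a "text" key)."""
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def send_alert(webhook_url: str, metric: str, actual: float, expected: float,
               opener=urllib.request.urlopen) -> int:
    """Send the alert and return the HTTP status; 'opener' is injectable for tests."""
    request = build_slack_request(webhook_url, format_alert(metric, actual, expected))
    with opener(request, timeout=10) as response:
        return response.status
```

Passing a stub as `opener` lets you exercise the whole path without touching the network.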

Case Study: The $50,000 “Fat Finger” Saved by a Script

Consider a global B2B SaaS company that recently expanded into the EMEA market. A junior account manager, while adjusting bids for a specific campaign, accidentally entered the intended total monthly budget into the campaign’s “Daily Budget” field. In the high-volume environment of broad-match keywords, Google’s systems were more than happy to oblige this sudden generosity.

Without anomaly detection, this error would have gone unnoticed until the finance department reconciled the credit card statement 30 days later. However, the company’s real-time monitoring script—running on a 30-minute interval—detected a spend velocity that was 1,200% above the rolling 7-day average. The script triggered an emergency “Pause All” command and sent a high-priority alert to the team’s Slack. Total time from error to resolution: 42 minutes. Total waste: $450. Potential waste: $50,000.

Future Horizons: Predictive Anomaly Detection

We are currently transitioning from reactive detection to predictive prevention. The next generation of anomaly detection tools will use Generative AI and Large Language Models (LLMs) to not only identify a spike but also provide an instant linguistic analysis of the “why.” Imagine receiving a notification that says: “Spend is up 40% because of a surge in ‘competitor X’ brand terms; however, CVR is down 10% because your landing page is returning a 404 error in the Berlin region.”

Furthermore, we are seeing the rise of Ad-Exchange Forensics. By analyzing the packet-level data of ad requests, real-time systems can identify the digital fingerprints of known botnets before the bid is even placed. This shifts the strategy from “reclaiming wasted budget” to “never spending it in the first place.”

Conclusion: The Vigilance Dividend

In the digital age, waste is not an inevitability; it is a choice. Every dollar lost to a bot, a broken pixel, or a manual error is a dollar that could have been used to reach a genuine customer, test a new creative, or expand into a new market. Anomaly detection is the technological manifestation of the “Vigilance Dividend”—the competitive advantage gained by those who refuse to let their budgets bleed out in the dark.

As the complexity of the ad-tech ecosystem continues to scale, the human eye will become increasingly inadequate as a primary defense. Embracing the analytical rigor of real-time monitoring is no longer a luxury for the data-obsessed; it is a fundamental requirement for the fiscally responsible. The question is no longer whether you can afford to implement anomaly detection, but whether you can afford the catastrophic cost of its absence.

Are you watching your metrics, or is your budget watching you?